A small matter of programming

We’re rebuilding ScraperWiki.

For three years, we’ve been helping people get, clean and analyse data on the web. Our key insight was that you need to write code to do that, and we should make writing that code as easy as possible.

Earlier this year, we realised that that isn’t enough.

ScraperWiki Classic, as we now call the original ScraperWiki, falls into a gap. It’s neither flexible enough for most programmers, nor simple enough for most non-programmers.

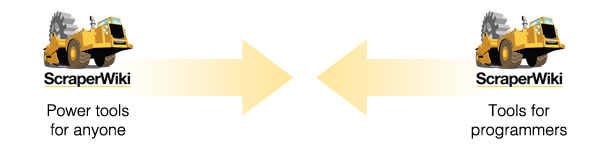

There’s a bunch of people who love it, right in the middle. Our plan was to push that sideways – add more features to make it useful to programmers, and add tools to make it useful to anyone.

We listened to and watched our users, and found it wasn’t going to work[1]. To make ScraperWiki useful to enough people now, we have to make it powerful and geeky enough for programmers, and, right at the other end, supply code-free tools that anyone can use straight away.

So that’s what we’re making. One unified platform that exposes the true power of a raw UNIX environment and a set of industry standards (like HTTP and SQL) for programmers; and that also, at the other end, builds on those foundations to give non-programmers access to a whole ecosystem of powerful, code-free, web-based tools for data science.

Everything is transparent, and every tool, no matter how simple, has a “view source” button so we can all get to, edit, and learn from the code underneath.

But more than transparency and code, ScraperWiki has always been about community. And that’s never going to change: By sharing one platform, the power-users can learn from the programmers, or use the tools they create, while the programmers can work with each other, and use the power-user tools to simplify the boring bits of coding.

If that sounds like your sort of data hub, stay tuned: We’ll be introducing the new ScraperWiki in the New Year.

[1] If you want to know more about the theory and practice of bringing “end-user computing” (letting people make their own custom apps) to everyone, I recommend Bonnie Nardi’s book A Small Matter of Programming.

Reuben’s said the diagrams above aren’t quite clear enough, so this comment is to try and explain them a bit more concretely…

Online code editors, and other innovations such as PaaS, make programming easier and reduce systems administration. However, they don’t magically enable non-programmers to program: that still requires months of work, and a personality that wants to be a programmer.

At the same time, having a very simplified online code editor with its own very simplified PaaS (backend for running the code) isn’t powerful enough for programmers. They complain that they want to use vim or TextMate, the Python debugger or git, Perl or Redis – each one has a different complaint.

There are a few people in the middle who really love such an easy-to-use online code editor – ScraperWiki Classic has a bunch of fans. However, that isn’t enough.

The new platform we’re making instead has general Unix shell accounts as its backend, so programmers can more easily deploy stuff to it. It also has a web frontend to that backend, with tools for anyone (more on this in later blog posts!), which still lets you get to the source code and run arbitrary code over the web.

I really, really like the idea of ‘a whole ecosystem of powerful, code-free, web-based tools for data science.’ Keep up the good work.

Hi Joe! Julian is looking at getting all the UN data into that ecosystem. How is your project going?

Does this affect the currently running platform? I just noticed that the ScraperWiki platform seems to be defunct.

Michael – no changes to the running platform right now. The same code can be transported from one to the other – as the new platform matures, we intend to help everyone migrate over.

I have 18 scrapers on the old ScraperWiki. Does that mean that I can only migrate 3 of them to the new platform for free?

And how do I migrate them without copying the code manually?

We’ve set up a new guide that might help answer your questions, Suzana: https://scraperwiki.com/help/scraperwiki-classic – take a look!

You might also want to keep an eye on our blog in the coming week or two. We’ll be posting a lot more about the recent changes, including our plans for encouraging open data use, and the reasoning behind our current account limits.